Hi. Today I will show you how you can quickly create a Kubernetes cluster in AWS.

Choosing the right way to run your application or service in the AWS cloud can be difficult. Much depends on factors such as the size of the service, how often it is updated, and how it is deployed. The most common options are:

- Running applications in virtual machines using AWS Elastic Compute Cloud (EC2).

- Running applications in managed or serverless mode using AWS Elastic Beanstalk, AWS App Runner or AWS Fargate.

- Running applications in containers using AWS Elastic Container Service (ECS) or Amazon Elastic Kubernetes Service (EKS).

Each of these options has its pros and cons. However, the one that offers the most flexibility is a Kubernetes cluster running on Amazon Elastic Kubernetes Service (EKS). That’s why today I will show how to create such a cluster and quickly launch a simple Nginx-based service on it.

What is Kubernetes?

Kubernetes (K8s) is an open-source container orchestration platform. It automates the deployment, scaling and management of containerized applications. Kubernetes makes it possible to build flexible, scalable container-based systems that are easy to manage and whose components can be replaced quickly.

What is AWS EKS?

Amazon Elastic Kubernetes Service (Amazon EKS), formerly known as Amazon Elastic Container Service for Kubernetes, is a managed service that makes it easy to run and scale a Kubernetes cluster on the AWS platform. Amazon EKS runs standard Kubernetes, so it is compatible with the existing Kubernetes tooling, and it lets you run applications without having to operate the Kubernetes control plane yourself. Amazon EKS automates many of the tasks associated with maintaining and scaling Kubernetes clusters, allowing you to focus on building and running applications.

Preparations

Before getting started, you need to create an AWS account and configure the AWS CLI. I have described the whole process in a tutorial on dev.to: https://dev.to/aws-builders/create-a-free-aws-account-and-configure-the-aws-cli-1khf

Helm

The first tool we need is Helm, a package manager for Kubernetes. Helm allows you to easily install, update and remove applications running in a cluster, and it describes each application as a configurable package called a “chart”.

The installation is done by running the following commands:

> curl -fsSL -o get_helm.sh https://raw.githubusercontent.com/helm/helm/main/scripts/get-helm-3

> chmod 700 get_helm.sh

> ./get_helm.sh

After installation, we should check that Helm works properly. To do this, we run the following command, which should show the currently installed version of Helm.

> helm version

version.BuildInfo{Version:"v3.8.2", GitCommit:"6e3701edea09e5d55a8ca2aae03a68917630e91b", GitTreeState:"clean", GoVersion:"go1.17.5"}

Kubectl

The next tool we will install is kubectl, the command-line client for Kubernetes. It sends requests to the cluster's API server, so developers and administrators can deploy, scale and manage applications running in a Kubernetes cluster from their own terminal.

We install the tool using the following commands:

> curl -LO https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/linux/amd64/kubectl

> chmod +x ./kubectl

> sudo mv ./kubectl /usr/local/bin/kubectl

After installation, we also verify that the tool works properly by checking its version. The --client flag limits the output to the locally installed client, since no cluster exists yet.

> kubectl version --client

Client Version: version.Info{Major:"1", Minor:"21+", GitVersion:"v1.21.2-13+d2965f0db10712", GitCommit:"d2965f0db1071203c6f5bc662c2827c71fc8b20d", GitTreeState:"clean", BuildDate:"2021-06-26T01:02:11Z", GoVersion:"go1.16.5", Compiler:"gc", Platform:"linux/amd64"}

Eksctl

The last tool needed to run the cluster is eksctl, a command-line tool for creating, managing and scaling Amazon Elastic Kubernetes Service (EKS) clusters. With eksctl you can easily create and delete clusters, add and remove nodes, and manage node groups and other cluster resources.

Here, installation also requires running a few commands:

> curl --silent --location "https://github.com/weaveworks/eksctl/releases/latest/download/eksctl_$(uname -s)_amd64.tar.gz" | tar xz -C /tmp

> sudo mv /tmp/eksctl /usr/local/bin

As with the previous tools, we check the version to confirm that the tool works.

> eksctl version

0.139.0

Launch of EKS cluster
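Before launching the cluster, it can be worth confirming in one go that every tool from the previous steps is actually on the PATH. The following is only a minimal sketch of such a check; the function name `missing_tools` is made up for this example and is not part of any of the tools.

```shell
#!/bin/sh
# Hypothetical helper: print the name of every tool from the
# argument list that cannot be found on PATH.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# The four tools used in this article.
missing=$(missing_tools aws helm kubectl eksctl)
if [ -n "$missing" ]; then
    # Intentionally unquoted so the names are joined on one line.
    echo "Missing tools:" $missing
else
    echo "All tools found."
fi
```

If the script reports a missing tool, repeat the corresponding installation step above before continuing.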

Once all the tools are ready, we can proceed to launch our cluster. A single instruction is sufficient for this:

> eksctl create cluster \
    --name nginx-cluster \
    --version 1.25 \
    --region eu-central-1 \
    --nodegroup-name k8s-nodes \
    --node-type t2.micro \
    --nodes 2

When starting the cluster, we need to provide additional information using flags. Below I provide an explanation of these flags:

- --name – The name of the EKS cluster. Must be unique within the AWS region.

- --region – The AWS region in which the cluster will be created.

- --version – The version of Kubernetes to be installed on the EKS cluster.

- --nodegroup-name – The name of the node group. A node group is a group of virtual machines that run in an EKS cluster.

- --node-type – The type of EC2 instance that will be used to run the cluster nodes.

- --nodes – The number of nodes in a node group. Default is 2.
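The same settings can also be kept in a file, which is easier to review and to store in version control than a long command line. The sketch below writes an eksctl config file that mirrors the flags above. It assumes that the node group is a managed node group, and the file name `cluster.yaml` is only an example.

```shell
# Write an eksctl config file that mirrors the command-line flags.
# Assumption: a managed node group; cluster.yaml is an example name.
cat > cluster.yaml <<'EOF'
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig

metadata:
  name: nginx-cluster
  region: eu-central-1
  version: "1.25"

managedNodeGroups:
  - name: k8s-nodes
    instanceType: t2.micro
    desiredCapacity: 2
EOF

# The cluster would then be created from the file instead of from flags:
#   eksctl create cluster -f cluster.yaml
```

The version is quoted so that YAML reads it as the string "1.25" rather than as a number.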

After about 10 minutes, the cluster will be created and we will get information about it in the console:

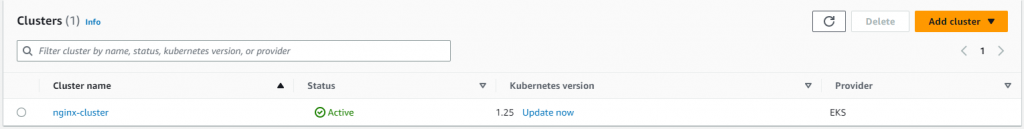

We can also view the status of the cluster in the AWS console: https://eu-central-1.console.aws.amazon.com/eks. After logging in, make sure that the selected region is the one in which the cluster was launched.
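The cluster can be checked from the terminal as well, since eksctl normally adds the new cluster to the local kubeconfig. As a rough sketch, the helper below counts the nodes that report the Ready status; the function name `count_ready_nodes` is hypothetical, and it only parses text passed on standard input.

```shell
# Hypothetical helper: count nodes whose STATUS column is exactly "Ready".
# Expects the output of `kubectl get nodes --no-headers` on stdin.
count_ready_nodes() {
    awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Usage against the running cluster (requires a working kubeconfig):
#   kubectl get nodes --no-headers | count_ready_nodes
```

For the cluster created above, the expected result is 2, matching the --nodes flag.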

When you click on the cluster name, you will enter a page with details. A message may appear at the top of the page: “Your current IAM principal doesn’t have access to Kubernetes objects on this cluster.” This message informs you that the account you are logged in with (here, the root account) does not have permissions to read information about objects in the cluster. To grant such permissions you should:

- copy your account ID (the ID is available in the AWS console, in the top right corner, after clicking on the account name)

- run the following command in the terminal:

> kubectl edit configmap aws-auth -n kube-system

After running the command, an editor (vi by default) will open, allowing you to edit the config map that controls authorization in AWS. Our task is to add the following code in the data node, replacing the account ID with your own:

    mapUsers: |
      - userarn: arn:aws:iam::888253778090:root
        groups:
        - system:masters

After adding the new code, the YAML with the configuration will look more or less like this (in [account_id] enter the ID of your account):

    apiVersion: v1
    data:
      mapRoles: |
        - groups:
          - system:bootstrappers
          - system:nodes
          rolearn: arn:aws:iam::[account_id]:role/eksctl-nginx-cluster-nodegroup-k8-NodeInstanceRole-XXXXX
          username: system:node:{{EC2PrivateDNSName}}
      mapUsers: |
        - userarn: arn:aws:iam::[account_id]:root
          groups:
          - system:masters
    kind: ConfigMap
    metadata:
      creationTimestamp: "2023-05-02T09:48:52Z"
      name: aws-auth
      namespace: kube-system
      resourceVersion: "3220"
      uid: XXX

After saving the changes, we can go to the AWS console and verify that we now have access to the cluster details.
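To avoid typing the account ID by hand, the mapUsers entry could be generated from the ID that the AWS CLI reports. This is only a sketch: the function name `map_users_entry` is invented for this example, and the output still has to be pasted under the data node of the config map manually.

```shell
# Hypothetical helper: print a mapUsers entry for the root principal
# of the given AWS account ID.
map_users_entry() {
    account_id=$1
    printf '%s\n' \
        "mapUsers: |" \
        "  - userarn: arn:aws:iam::${account_id}:root" \
        "    groups:" \
        "    - system:masters"
}

# Usage with the account ID of the currently configured AWS CLI profile:
#   map_users_entry "$(aws sts get-caller-identity --query Account --output text)"
```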

Installation of Nginx server on EKS cluster

After the cluster is up and running, we can proceed to install the Nginx server. To do this, we will use Helm Charts.

In the first step, we add the Bitnami chart repository to our local Helm configuration. It contains the chart that describes how to run the Nginx server. We do this by running the command:

> helm repo add bitnami-repo https://charts.bitnami.com/bitnami

"bitnami-repo" has been added to your repositories

In the next step, we start the Nginx server itself with the command below. The repository name must match the one used in the previous command:

> helm install nginx-release bitnami-repo/nginx

NAME: nginx-release

LAST DEPLOYED: Tue May 2 12:09:15 2023

NAMESPACE: default

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

CHART NAME: nginx

CHART VERSION: 14.1.0

APP VERSION: 1.24.0

We can check the status of the installation with the following command, which will display information about the Kubernetes deployment:

> kubectl get deployment

NAME READY UP-TO-DATE AVAILABLE AGE

nginx-release 1/1 1 1 53s

The pod with the Nginx server will also be visible in the AWS console.

Expose Nginx server on EKS cluster to the world

Once the server is up and running, we would like to check that everything is working well. To do this, we can expose the server to the world by creating a service with a load balancer for it. We need to run the following instruction:

> kubectl expose deployment nginx-release --type=LoadBalancer --name=nginx-service-loadbalancer

service/nginx-service-loadbalancer exposed

Now we can retrieve the address of the load balancer through which we can connect to our service. We can do this using the instruction:

> kubectl get service/nginx-service-loadbalancer | awk '{print $1 " " $2 " " $4 " " $5}' | column -t

NAME TYPE EXTERNAL-IP PORT(S)

nginx-service-loadbalancer LoadBalancer XXXXX.eu-central-1.elb.amazonaws.com 8080:31376/TCP

The EXTERNAL-IP column contains the address of our load balancer. Now we can type this address in the browser, adding port 8080 at the end, or we can run the curl command, which will retrieve the page title for us.

> curl --silent XXXXX.eu-central-1.elb.amazonaws.com:8080 | grep title

<title>Welcome to nginx!</title>

Bravo! You just launched your first service on EKS.
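A freshly created load balancer may need a minute or two before its address starts responding, so the first request can fail. One way to handle this is a small retry helper, sketched below. The function name `retry` and the chosen number of attempts are assumptions made for this example.

```shell
# Hypothetical helper: run a command until it succeeds.
# $1 = maximum number of attempts, $2 = seconds to sleep between attempts,
# remaining arguments = the command to run.
retry() {
    attempts=$1
    delay=$2
    shift 2
    i=1
    while [ "$i" -le "$attempts" ]; do
        "$@" && return 0
        if [ "$i" -lt "$attempts" ]; then
            sleep "$delay"
        fi
        i=$((i + 1))
    done
    return 1
}

# Usage: read the load balancer hostname and wait until Nginx answers.
#   host=$(kubectl get service nginx-service-loadbalancer \
#       -o jsonpath='{.status.loadBalancer.ingress[0].hostname}')
#   retry 30 10 curl --silent --fail --output /dev/null "http://$host:8080"
```

The helper returns a non-zero status when every attempt fails, so it can also be used in scripts that should stop when the service never becomes reachable.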

Removing the cluster

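If you want to remove the EKS cluster, it is best to use the eksctl tool again. First delete the load balancer service, so that the AWS load balancer created for it is removed, and then delete the cluster itself: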

> kubectl delete service nginx-service-loadbalancer

service "nginx-service-loadbalancer" deleted

> eksctl delete cluster --name nginx-cluster --region eu-central-1

2023-05-02 12:34:10 [ℹ] waiting for CloudFormation stack "eksctl-nginx-cluster-nodegroup-k8s-nodes"

2023-05-02 12:34:11 [ℹ] will delete stack "eksctl-nginx-cluster-cluster"

2023-05-02 12:34:11 [✔] all cluster resources were deleted

Summary

In the article, I discussed how to deploy your application to an EKS cluster. Preparing the tools for this process is not difficult and requires only a few steps.

Remember, however, that this is only the beginning of the adventure of launching and managing applications in the cloud. Once your application is up and running, you need to take care of things like monitoring and effectively deploying changes via CI/CD. For a tutorial describing how to build your application in AWS CodeBuild, see “How to prepare the first CI project in AWS CodeBuild?”.
